Key performance indicators of Research Infrastructures

Executive summary

Following the recent Competitiveness Council Conclusions, which invite Member States and the Commission, within the framework of ESFRI, to develop a common approach for monitoring the performance of research infrastructures (RIs), ERF-AISBL (Association of European-level Research Infrastructure Facilities) sent a questionnaire to the community of European RIs. The aim was to gain better insight into how they address, or would address, Key Performance Indicators (KPIs). Thirty-six replies were received, as listed in Annex 1. The results show fairly strong agreement with the proposed principles across the landscape of RIs.

While half of the respondents already have KPIs in place, the other half agree that they should have them. Respondents also believe that KPIs should be used in the strategic management of their institutions and should therefore be adopted by, and reported to, the decision-making bodies of the RIs. There is also a strong preference for publishing KPIs, although some RIs stress that published KPIs must be put into context, both for clarity and because, without such contextual information, performance cannot be reliably compared across RIs.

The RIs consider the quality of indicators to be highly important. Respondents agree that indicators should be “relevant, accepted, credible, easy to monitor and robust”, although only three report that their current KPIs already meet these criteria. The prevailing opinion of the RIs is that KPIs should be linked to the objectives of their institutions. Because each RI also pursues objectives specific to it, a number of respondents warn against a ‘top-down’ approach that would prescribe the same indicators for all RIs. Some respondents also emphasize that, in addition to quantitative KPIs, attention should be devoted to non-measurable, qualitative performance criteria, and propose that KPIs be accompanied by case studies and other narratives in order to present appropriately the progress made towards the objectives of their infrastructure.

Introduction

There is little doubt that publicly funded instruments and institutions should have a performance monitoring system in place. Key performance indicators (KPIs), which describe how well an institution or a programme is achieving its objectives, play a key role in this process. They are an indispensable management tool: they allow progress to be monitored, enable evidence-based decision-making and help in developing future strategies. They can also contribute significantly to the successful communication of results and achievements, and thus to the financial sustainability of research infrastructures (RIs), as well as to increased transparency. In addition, they play a role in evaluating socio-economic return.

Performance monitoring is an obligatory activity for many publicly funded bodies and initiatives. For Horizon 2020, for example, the European Commission (EC) has a legal obligation to monitor the Programme’s implementation, report annually and disseminate the results of such monitoring. The KPI used by the EC in the field of RIs is the number of researchers who have access to RIs thanks to Union support [1].

Despite the importance of KPIs for the effective management of publicly supported initiatives, the topic is new to many RIs. In 2013, ESFRI’s expert group on indicators published its Proposal to ESFRI on “Indicators of pan-European relevance of research infrastructures”. These indicators were intended as a toolkit for evaluating the pan-European relevance of ESFRI RIs and of future RIs applying for inclusion in the ESFRI Roadmap. However, ESFRI did not take up the expert group’s proposal.

Based on the responses of RIs to the public consultation on long-term sustainability of RIs [2], there appears to be a general understanding among them that monitoring should be carried out on a regular basis and that KPIs should be used in this context. However, the use of KPIs by RIs is not yet consistent, as shown by a questionnaire initiated by CERIC-ERIC, to which 12 of the 19 established ERICs responded [3].

The results of this survey indicate that 2 of the 12 responding ERICs do not have performance indicators in place, and that the procedures for setting them up vary, as do the number of indicators and the reporting methods. The KPIs common to most of the ERICs are “Access Units Delivered”, “Training Courses Delivered” and “Number of Publications”.

The need for improved performance monitoring was also recognised by the EC in its staff working document ‘Sustainable European Research Infrastructures, A Call for Action’, in which Action 6 proposes to ‘assess the quality and impact of the RI and its services, by developing a set of Key Performance Indicators, based on Excellence principles’ [4].

This was echoed by the Conclusions of the Conference “Research Infrastructures beyond 2020 – sustainable and effective ecosystem for science and society” organised by the Bulgarian Presidency of the EU Council [5], which indicated that ‘there is a need for systemic monitoring and impact assessment of pan-European Research Infrastructures. It should be based on a commonly agreed methodology and process to define the Key Performance Indicators, reflecting the objectives of the various RIs, and to elaborate the socio-economic impact, in order to ensure continuous update of their scientific and strategic relevance.’

Based on these inputs, the EU Competitiveness Council invited ‘Member States and the Commission within the framework of ESFRI to develop a common approach for monitoring of their performance and invited the pan-European Research Infrastructures, on a voluntary basis, to include it in their governance and explore options to support this through the use of Key Performance Indicators’ [6].

Also on the basis of these inputs, ERF-AISBL decided to set up a short questionnaire on KPIs, which was sent to its members, to ERICs and to ESFRI RIs. The questionnaire was accompanied by an Explanatory Note outlining some of the issues it addressed.

Results of the ERF-AISBL questionnaire on Key Performance Indicators

Thirty-six replies were received. Twelve came from ERF members and 23 from ESFRI RIs or ERICs; since two of the latter are also ERF members, these groups account for 33 respondents, and three further replies make up the total. The full list is presented in Annex 1.

The results are reported here question by question, including the respondents’ comments, which have been anonymised at the request of some RIs.

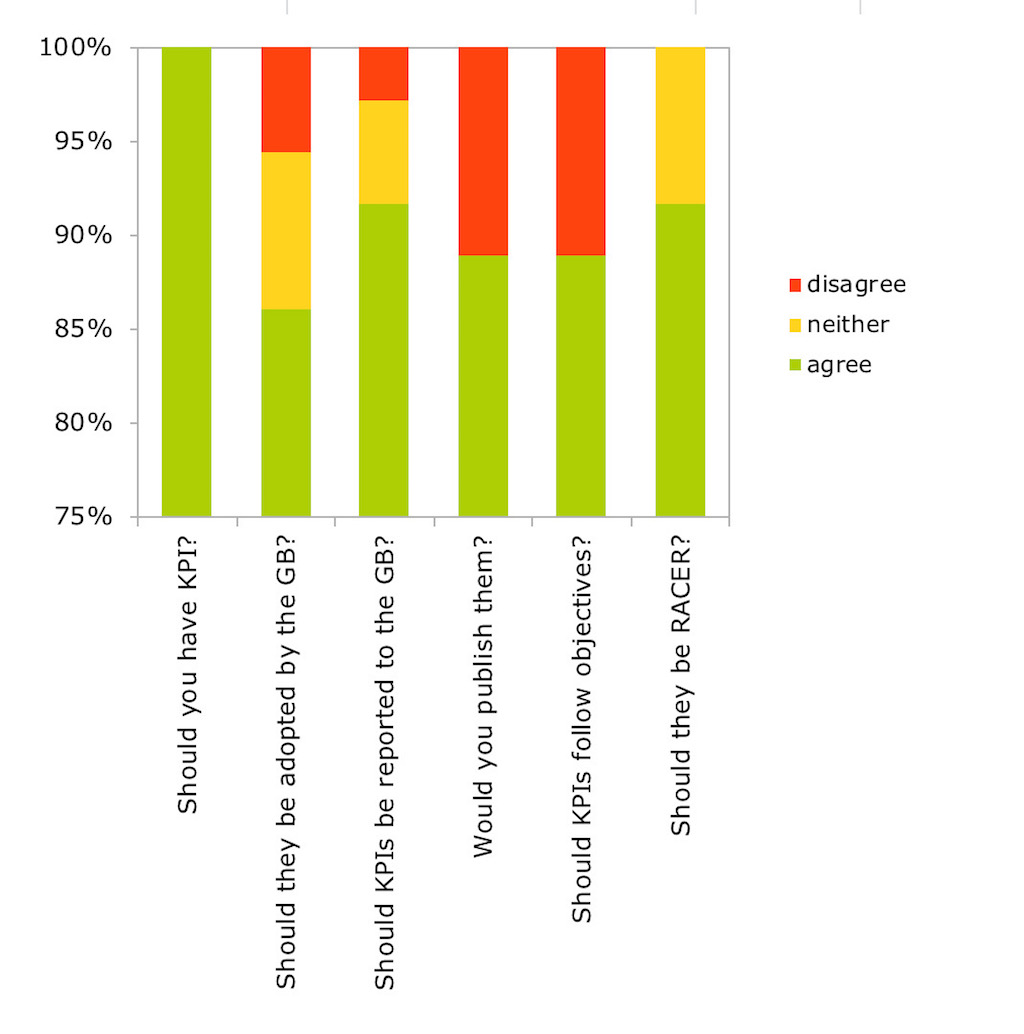

Results of the replies to questions 1-6 are presented in Figure 1.

Figure 1: Replies to questions 1–6. ‘Agree’ combines the replies ‘already implemented’, ‘strongly agree’ and ‘agree’, while ‘disagree’ combines the replies ‘strongly disagree’ and ‘disagree’. RACER stands for Relevant, Accepted, Credible for non-experts, Easy to monitor, Robust.

Q1: Do you think your RI should have Key Performance Indicators (KPI)?

The responding RIs are convinced of the need for a set of KPIs to monitor performance. Half of the respondents already have KPIs in place, while the others strongly agree (30.6%) or agree (19.4%) with the statement.
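Because the shares reported in this section are percentages of the 36 replies received, they can be converted back into numbers of replies, which also checks that the answer categories add up. A minimal Python sketch for Q1, assuming simple rounding to the nearest whole reply (the helper name `to_count` is illustrative, not part of any survey tool):

```python
TOTAL_REPLIES = 36  # replies received to the ERF-AISBL questionnaire


def to_count(share_percent: float, total: int = TOTAL_REPLIES) -> int:
    """Convert a reported percentage back to the nearest whole number of replies."""
    return round(share_percent / 100 * total)


# Reported Q1 shares: half already have KPIs, 30.6% strongly agree, 19.4% agree.
q1_shares = {"already implemented": 50.0, "strongly agree": 30.6, "agree": 19.4}
q1_counts = {answer: to_count(share) for answer, share in q1_shares.items()}

print(q1_counts)  # {'already implemented': 18, 'strongly agree': 11, 'agree': 7}
print(sum(q1_counts.values()) == TOTAL_REPLIES)  # True
```

The three Q1 groups thus correspond to 18, 11 and 7 replies, together accounting for all 36; the same conversion applies to the shares reported for the other questions.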

Q2: Do you think that your governing body should be responsible for adopting the KPIs of your RI? & Q3: Do you think that results of the performance monitoring should be reported to the governing body of your RI on an annual basis?

The Explanatory Note accompanying the questionnaire suggested that performance monitoring should ideally be based on a well-defined monitoring framework, adopted by the RI’s governing body, that ensures reliable and consistent data collection. The RI’s management should then report the performance data, expressed as KPIs, to the governing body annually, allowing remedial actions to be developed and future strategic directions to be adopted.

Of the respondents, 56% strongly agree that the governing body should be responsible for adopting the KPIs, and for 31% this is already the case. One RI disagreed and one strongly disagreed with the statement. Support was slightly weaker for reporting the results to the governing body annually (25% strongly agree, 31% agree, and 36% already have this scheme in place).

Two RIs stressed in their comments that KPIs should be defined by the RI, in consultation with its governing body / funders.

A further comment highlighted that KPIs should be key for the RI itself: they should help the RI’s management measure “success” and goal achievement. The same comment suggested that the RI’s decision-making body could also take note of performance indicators designed to monitor internal processes, as a cross-check that the executive director is fulfilling the mission.

Q4: Would you opt for publishing KPIs in your RI’s annual report?

The Explanatory Note states that publishing KPIs, for example in an annual report, is a delicate matter. Publication certainly increases the transparency of operations and allows RIs to be compared, but indicators presented without context can easily be misinterpreted and misused if proper attention is not paid to the relevance of the various KPIs to external stakeholders and to the background information needed to interpret them. The Note therefore advises careful consideration of possible negative effects or unintended consequences before deciding to publish KPIs.

A quarter of the responding RIs report that they already publish their KPIs, and a further 64% would opt to publish them. Only 4 RIs would not.

A considerable number (six) of the RIs that would opt to publish at least a selection of their KPIs [7] state that they would wish to qualify them or put some of them into context. One RI stresses that KPIs should not be mandatory and cannot be used to compare performance across RIs.

Context is indeed relevant. One RI gives the example of excellence of scientific output, which can be expressed annually by, among other measures, the average impact factor of publications. However, because impact factors depend on the scientific field, this measure may lead to erroneous conclusions when different infrastructures are compared. Even if the bibliometric analysis takes this into account and is normalised by scientific domain, the outcomes may vary greatly with the strength of the scientific community using the RI. Moreover, where that community is relatively young and growing, reasonable expectations of its current strength may be very different from those for a more established and mature community.
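Field normalisation of the kind mentioned above is what indicators such as the Mean Normalised Citation Score (MNCS), recommended by one respondent under Q5, are designed for. In its usual bibliometric definition, each publication’s citation count is divided by the average citation count of publications from the same field, year and document type, and these ratios are then averaged. The Python sketch below assumes those expected values are already available from a bibliometric database; the two publication sets are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Publication:
    citations: int             # citations received by this publication
    expected_citations: float  # mean citations of publications of the same field, year and type


def mean_citations(pubs: list[Publication]) -> float:
    """Raw average citations per publication (not field-normalised)."""
    if not pubs:
        raise ValueError("undefined for an empty set of publications")
    return sum(p.citations for p in pubs) / len(pubs)


def mncs(pubs: list[Publication]) -> float:
    """Mean Normalised Citation Score: mean of citations / expected citations.

    1.0 means the publications are cited at the average for their fields.
    """
    if not pubs:
        raise ValueError("undefined for an empty set of publications")
    return sum(p.citations / p.expected_citations for p in pubs) / len(pubs)


# Hypothetical output of two RIs serving fields with different citation norms.
ri_a = [Publication(20, 25.0), Publication(30, 25.0)]  # high-citation field
ri_b = [Publication(4, 4.0), Publication(8, 4.0)]      # low-citation field

for name, pubs in [("RI A", ri_a), ("RI B", ri_b)]:
    print(name, mean_citations(pubs), round(mncs(pubs), 2))
```

On raw averages RI A looks about four times stronger (25 versus 6 citations per publication), yet after normalisation RI B is well above its field average (1.5) while RI A sits exactly at it (1.0). As the paragraph above notes, such normalisation corrects for field differences only, not for the size or maturity of the user community.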

As emphasized by another RI, ‘KPIs are an instrument that cannot become an absolute measurement of performance. They should be supported by an adequate narrative and show incremental trends towards successful fulfilment of the mission of RI. They must be agreed by the Governance of the RI and its stakeholders as “personalized” KPIs. They cannot become absolute numbers to make comparative analysis between different RIs.’ The respondent then recalled the negative effects of the h-index and impact factors on the recruitment of scientists in academia and research institutions.

Q5: Do you agree that performance indicators should be linked to the intervention logic of your RI, i.e. the objectives behind the establishment of your RI?

The Explanatory Note proposed that indicators be linked to the intervention logic, i.e., that they follow the objectives underpinning the establishment of the RI. While all pan-European RIs share the objective of enabling the production of excellent science, they also follow specific objectives, as described in their statutes. An overview of these would be needed in order to propose a list of recommended indicators.

Furthermore, the Note stated that ESFRI might consider suggesting performance indicators that take into account the so-called results chain [8] of performance monitoring, i.e. a causal sequence for a development intervention that stipulates the necessary sequence to achieve desired objectives, beginning with inputs, moving through activities and outputs, and culminating in outcomes, impacts and feedback. Various ‘impact pathways’ may be developed to address different objectives of the RIs. Table 1 presents an example of a pathway addressing the objective of knowledge creation.

Table 1: One possible impact pathway addressing the objective of knowledge creation.

| Chain element | Definition | Example |
| --- | --- | --- |
| Inputs | The financial, human, and material resources used for the development of the intervention. | Financial resources per year |
| Activities | Actions taken or work performed through which inputs, such as funds, technical assistance and other types of resources, are mobilised to produce specific outputs. | Number of open calls per year |
| Outputs | The products, capital goods, and services that result from a development intervention; may also include changes resulting from the intervention that are relevant to the achievement of outcomes. | Number of researchers who have access to research infrastructures |
| Outcomes | The likely or achieved short-term and medium-term effects of an intervention’s outputs. | Number of publications, average impact factor of publications or similar |
| Impact | Positive and negative, primary and secondary long-term effects produced by a development intervention, directly or indirectly, intended or unintended. | Number and share of peer reviewed publications based on the research supported by the RI that are core contribution to scientific fields* |

*Adapted from the proposed long-term scientific pathway indicator of Horizon Europe.
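Because the results chain is an ordered sequence, an RI building several impact pathways might represent each pathway as a mapping from chain element to indicator and check that no element has been left without one. The Python sketch below does this for the knowledge-creation pathway of Table 1; the class and function names are illustrative assumptions, not part of any ESFRI or EC tool.

```python
from enum import IntEnum


class ChainElement(IntEnum):
    """Elements of the results chain, in causal order (inputs first, impact last)."""
    INPUTS = 1
    ACTIVITIES = 2
    OUTPUTS = 3
    OUTCOMES = 4
    IMPACT = 5


# Example indicators for the knowledge-creation pathway, as listed in Table 1.
KNOWLEDGE_CREATION: dict[ChainElement, str] = {
    ChainElement.INPUTS: "Financial resources per year",
    ChainElement.ACTIVITIES: "Number of open calls per year",
    ChainElement.OUTPUTS: "Number of researchers who have access to research infrastructures",
    ChainElement.OUTCOMES: "Number of publications, average impact factor of publications or similar",
    ChainElement.IMPACT: (
        "Number and share of peer reviewed publications based on the research "
        "supported by the RI that are core contribution to scientific fields"
    ),
}


def missing_elements(pathway: dict[ChainElement, str]) -> list[ChainElement]:
    """Chain elements for which the pathway defines no indicator, in causal order."""
    return [element for element in ChainElement if element not in pathway]


def describe(pathway: dict[ChainElement, str]) -> None:
    """Print the pathway from inputs to impact."""
    for element in sorted(pathway):
        print(f"{element.name.title():<10} {pathway[element]}")


describe(KNOWLEDGE_CREATION)

# A hypothetical pathway that only tracks outputs and outcomes has gaps at both ends.
partial = {ChainElement.OUTPUTS: "Access units delivered",
           ChainElement.OUTCOMES: "Number of publications"}
print([e.name for e in missing_elements(partial)])  # ['INPUTS', 'ACTIVITIES', 'IMPACT']
```

Using an `IntEnum` keeps the causal order explicit, so sorting or iterating always runs from inputs to impact.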

The development of a socio-economic impact model is the objective of the RI-PATHS project, co-funded by the EC [9]. The ongoing ACCELERATE project [10], also co-funded by the EC, is another such initiative: its deliverables include a methodology for assessing societal return and societal return reports from the project partners. In parallel, the Global Science Forum of the OECD is investigating the existing demands and remaining challenges regarding socio-economic impact assessment methodologies and tools [11]. Impact assessments are often supported by expert assessment that considers quantitative indicators as well as narratives.

Feedback received through the questionnaire shows strong agreement that KPIs should be linked to the objectives of the RIs: 28% of the respondents already have this in place, and a further 67% strongly agree that this should be the case.

In their comments, one RI stressed that KPIs should be designed according to the RI’s main mission, and another that they should relate mainly to the intervention logic and be specific and tuned to the characteristics of each RI. One RI proposed that a set of about five KPIs common to all RIs could be defined, with each RI adding further KPIs specific to it. Three others pointed out that KPIs should primarily be relevant to their own research infrastructure rather than conform to a predefined set of standardised KPIs applied to all.

One respondent noted that ‘someone at some point may wish to suggest a core set of KPIs that could be adopted by most if not all RIs’, and warned against the ‘pitfalls of blind use of certain KPIs by all RIs. For example, publication citations, while tempting at first sight, need to be introduced in a manner that takes account of the scientific domain (for which the Mean Normalised Citation Score – MNCS is more meaningful and useful than simple citation statistics) and reflect the average for the geographic region (to provide some idea of the added value of the RI). By defining such KPIs more carefully one could draw attention to the danger of more careless use of KPIs.’

Three RIs stressed that attention should also be devoted to non-measurable, qualitative performance criteria, and one reports that it also provides case studies and other narrative accounts of performance in its reports and assessments.

Three RIs also indicated that performance indicators should not be used only for annual monitoring and reporting but should also become part of (daily) management practice. One RI detailed this, listing the following types of indicators that it uses:

- EU project-specific indicators (for the EU grant), which ensure that the project is on track; these are not public but are reported to the EC during reporting periods;

- Programme-related (number of Member States that have joined, number of Collaboration Agreements signed, number of Service Delivery Plans concluded, number of Commissioned Service contracts, length of time that it takes to sign each contract, etc.);

- Strategy-related (specific KPIs and metrics for the Communications Strategy, International Strategy and Industry Strategy, each specific to its strategy and allowing the RI to benchmark itself against other RIs);

- Longer-term socio-economic impact (currently under consideration and development).

Regarding impact indicators, one RI recognised the difficulty of measuring impact-level indicators. Indeed, for its recently published Impact Assessment Report, which it aims to repeat in five years, ICOS RI (Integrated Carbon Observation System) contracted a specialised consulting firm. Another RI, ESS, refers to the OECD framework for its socio-economic impact but did not provide further details.

Q6: Do you agree with the proposed KPIs guiding principles? (Relevant, Accepted, Credible for non-experts, Easy to monitor, Robust)

The Explanatory Note proposed that KPIs should fulfil the RACER criteria [12]:

- Relevant: closely linked to the objectives to be achieved;

- Accepted: e.g. by staff and stakeholders;

- Credible for non-experts: unambiguous and easy to interpret;

- Easy to monitor: e.g. data collection should be possible at low cost;

- Robust: e.g. against manipulation.

While it is highly recommended that the proposed indicators meet these requirements, it is also essential that the monitoring system remain reasonable in scale and compatible with the administrative capacity of each organisation. A limited number of indicators that still allow progress to be monitored against a variety of objectives therefore seems advisable.
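An RI reviewing its KPIs against the RACER criteria might record the outcome as a simple per-indicator checklist. The Python sketch below is a hypothetical illustration: the class, the field names and the example judgements are invented, and whether a given KPI meets each criterion remains a decision for the RI and its stakeholders.

```python
from dataclasses import dataclass

# Attribute names of the five criteria, in RACER order.
CRITERIA = ("relevant", "accepted", "credible", "easy_to_monitor", "robust")


@dataclass
class RacerCheck:
    """A single KPI assessed against the RACER criteria (True = criterion met)."""
    kpi: str
    relevant: bool         # closely linked to the objectives to be achieved
    accepted: bool         # e.g. by staff and stakeholders
    credible: bool         # credible for non-experts, unambiguous, easy to interpret
    easy_to_monitor: bool  # e.g. data collection possible at low cost
    robust: bool           # e.g. against manipulation

    def unmet(self) -> list[str]:
        """Names of the RACER criteria this KPI does not (yet) satisfy."""
        return [name for name in CRITERIA if not getattr(self, name)]


# Illustrative judgements only; they are not results from the questionnaire.
checks = [
    RacerCheck("Number of open calls per year", True, True, True, True, True),
    RacerCheck("Average impact factor of publications", True, True, False, True, False),
]

for check in checks:
    gaps = check.unmet()
    status = "meets all RACER criteria" if not gaps else "unmet: " + ", ".join(gaps)
    print(f"{check.kpi}: {status}")
```

Keeping one record per KPI makes it easy to see which indicators need redesign, and the length of the list doubles as a reminder to keep the indicator set small.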

The replies to the questionnaire strongly supported the proposed criteria. While only 3 RIs report that their KPIs already follow the RACER criteria, a further 83% strongly agree or agree that they should.

Q7: How many KPIs do you use / think should be used to monitor the performance of your RI?

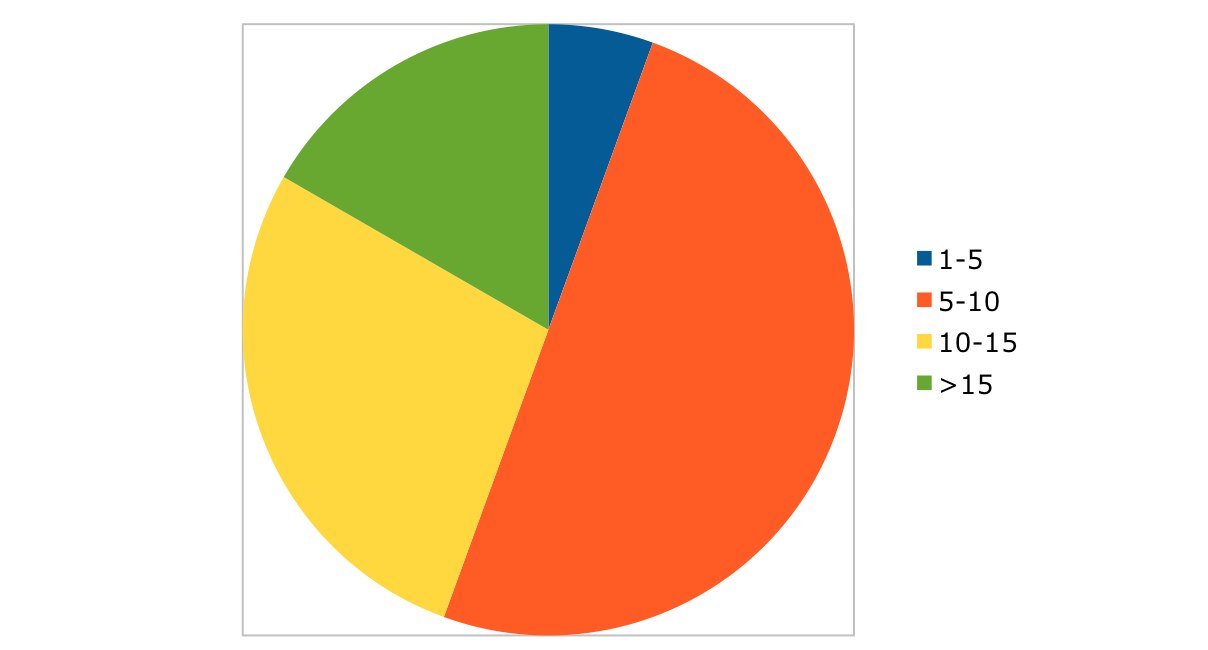

Replies to this question are summarized in Figure 2. Most RIs use 5–10 KPIs, while a significant number (six) have more than 15. As one RI commented, this may seem a lot, but some indicators are much more important or influential than others. Indeed, if progress towards each objective is monitored through a full pathway of indicators such as that in Table 1, which covers five chain elements for a single objective, the expected number is well over 15. While some indicators (e.g. number of open calls per year) are easy to implement, others require more effort.

Figure 2: Number of KPIs used by the responding RIs. Numbers represent number of responses out of a total of 36.
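The arithmetic behind the remark that full impact pathways lead to well over 15 indicators can be sketched in Python as follows. It assumes, hypothetically, that each objective has its own pathway covering the five chain elements of Table 1 and that no indicator is shared between pathways; sharing, for example, input indicators across pathways would lower the totals.

```python
# The five elements of the results chain used in Table 1.
CHAIN_ELEMENTS = ("inputs", "activities", "outputs", "outcomes", "impact")


def pathway_indicator_count(objectives: int, indicators_per_element: int = 1) -> int:
    """Indicators needed if every objective is tracked through its own full pathway.

    Assumes no indicator is shared between pathways.
    """
    if objectives < 0 or indicators_per_element < 0:
        raise ValueError("counts must be non-negative")
    return objectives * len(CHAIN_ELEMENTS) * indicators_per_element


for objectives in (1, 3, 4, 6):
    print(objectives, "objectives ->", pathway_indicator_count(objectives), "indicators")
```

With one indicator per chain element, three objectives already give 15 indicators and four give 20; two indicators per element double these figures.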

In addition to the KPIs, one RI stressed that there should also be mechanisms to assess high-level risks to the RI and take steps to mitigate these. This can be done through a risk register, for example.

Conclusion

While the recent Council Conclusions underlined the importance of performance management, care must be taken to design and implement it correctly. The principles behind performance management should be considered, and a balance struck between its objectives and the administrative burden it can impose on staff. Implemented in such a balanced way, KPIs can contribute significantly to the long-term sustainability of a research infrastructure, since funders base their investment decisions partly on the results and impacts of the initiatives they fund, and such data can help RI managers deliver what is expected of them. Furthermore, providing quality data on the performance of RIs not only shows that they have credible, output-oriented management in place but also provides assurance that the various objectives behind their establishment are indeed being pursued effectively.

This note was prepared by Jana Kolar (CERIC-ERIC), Andrew Harrison (Diamond Light Source Ltd) and Florian Gliksohn (ELI-DC) for ERF. CERIC-ERIC was supported by the ACCELERATE project, funded by the European Union Framework Programme for Research and Innovation Horizon 2020, under grant agreement 731112.

The authors would like to sincerely thank the participating research infrastructures for their invaluable input.


02.04.2026

CERIC Newsletter | April 2026