Key performance indicators of Research Infrastructures / 1

by Jana Kolar, CERIC-ERIC

Andrew Harrison, Diamond Light Source Ltd

Florian Gliksohn, ELI-DC

There is little doubt that publicly funded instruments or institutions should have a performance monitoring system in place. Key performance indicators (KPIs), which describe how well an institution or a programme is achieving its objectives, play a key role in this process. They are an indispensable management tool, allowing monitoring of progress, enabling evidence-based decision-making, and aiding in the development of future strategies. They can also significantly contribute to the successful communication of results and achievements, and thus to the financial sustainability of institutions, as well as to increased transparency. In addition, they play a role in the evaluation of socio-economic return.

Because of its importance, performance monitoring is an obligatory activity for many publicly funded bodies and initiatives. For Horizon 2020, for example, the European Commission has a legal obligation to monitor the Programme’s implementation, report annually and disseminate the results of such monitoring. The KPI used in the field of research infrastructures (RIs) by the European Commission is the number of researchers who have access to research infrastructures thanks to the Union’s support [1].

Given the importance of KPIs for the effective management of publicly supported initiatives, the topic is not new to many RIs. In 2013, ESFRI’s expert group on indicators published its Proposal to ESFRI on “INDICATORS OF PAN-EUROPEAN RELEVANCE OF RESEARCH INFRASTRUCTURES” [2]. Such indicators were to be used as a toolkit for the evaluation of the pan-European relevance of ESFRI RIs and future RIs applying for inclusion in the ESFRI roadmap. However, the proposal was not taken up by the RIs.

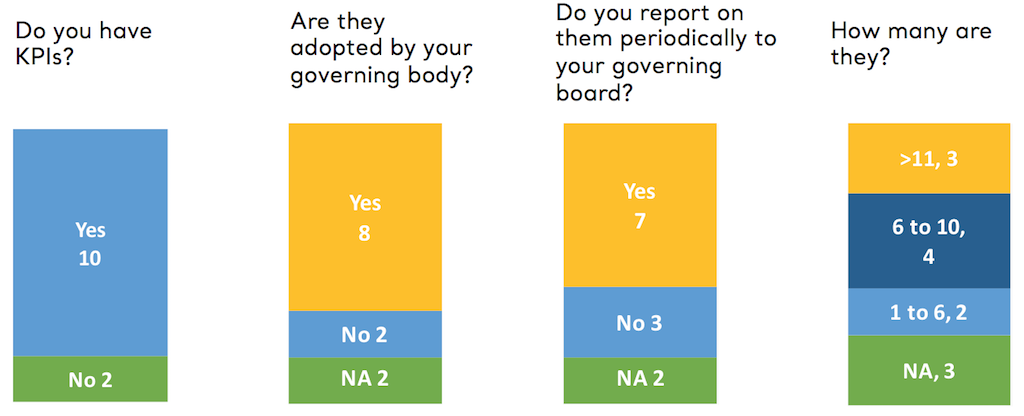

Based on the responses of RIs to the public consultation on long-term sustainability of RIs [3], there appears to be a general understanding among them that monitoring should be carried out on a regular basis and that KPIs should be used in this context. However, the use of KPIs by RIs is not yet systematic, as shown in 2018 by a questionnaire on the topic initiated by CERIC-ERIC, to which 12 of the 19 established ERICs responded [4] (see Figure 1).

Figure 1: Replies of 12 ERICs to the Questionnaire about KPIs [5].

The results of this survey indicate that two of the twelve responding ERICs do not have performance indicators in place, and that the procedures for setting them up vary, as do the number of indicators and the reporting methods. The KPIs common to most of the ERICs are Access Units Delivered, Training Courses Delivered and Publications.

The need for improved performance monitoring was also recognised by the European Commission in its staff working document ‘Sustainable European Research Infrastructures, A Call for Action’, in which action 6 proposes to ‘assess the quality and impact of the RI and its services, by developing a set of Key Performance Indicators, based on Excellence principles’ [6].

This was echoed by the Conference Conclusions of the Bulgarian Presidency of the EU Council Conference Research Infrastructures beyond 2020 – sustainable and effective ecosystem for science and society [7], which indicated that ‘there is a need for systemic monitoring and impact assessment of pan-European Research Infrastructures. It should be based on a commonly agreed methodology and process to define the Key Performance Indicators, reflecting the objectives of the various RIs, and to elaborate the socio-economic impact, in order to ensure continuous update of their scientific and strategic relevance.’

Based on these inputs, the EU Competitiveness Council invited ‘Member States and the Commission within the framework of ESFRI to develop a common approach for monitoring of their performance and invited the Pan-European Research Infrastructures, on a voluntary basis, to include it in their governance and explore options to support this through the use of Key Performance Indicators’ [8].

What follows presents to ESFRI a possible approach to implementing the decision of the Council of Ministers. It covers a process and some of the desired properties of the KPIs, without elaborating on them in detail.

PROCESS

Performance monitoring should ideally be based upon a well-defined monitoring framework, adopted by the RI’s governing body, that ensures reliable and consistent collection of data. The performance data, expressed as KPIs, should be reported by the RI’s management to the governing body on an annual basis, allowing for the development of remedial actions and the elaboration of future strategic directions. Publication of the KPIs, for example in an annual report, is trickier. While it certainly contributes to the increased transparency of operations and allows for comparison of RIs, the danger is that indicators presented without context can easily be misinterpreted and misused if proper attention is not paid to the relevance of the various KPIs to external stakeholders and to the background information necessary for their proper interpretation. Careful consideration of the possible negative effects is advised before deciding to publish the KPIs.

KEY PERFORMANCE INDICATORS

Firstly, the proposed indicators should be linked to the intervention logic, i.e., should follow the objectives behind the establishment of the RI. While all pan-European RIs share the objective of enabling the production of excellent science, they also follow specific objectives, as described in their Statutes. An overview of these would be needed in order to propose a list of recommended indicators.

In addition, ESFRI might consider suggesting a performance framework that would cover the so-called results chain [9]:

| Chain element | Definition | Example |
| --- | --- | --- |
| Inputs | The financial, human, and material resources used for the development of the intervention. | Financial resources per year |
| Activities | Actions taken or work performed through which inputs, such as funds, technical assistance and other types of resources, are mobilised to produce specific outputs. | Number of open calls per year |
| Outputs | The products, capital goods, and services that result from a development intervention; may also include changes resulting from the intervention that are relevant to the achievement of outcomes. | Number of researchers who have access to research infrastructures |
| Outcomes | The likely or achieved short-term and medium-term effects of an intervention’s outputs. | Average impact factor of publications |
| Impact* | Positive and negative, primary and secondary long-term effects produced by a development intervention, directly or indirectly, intended or unintended. | Number and share of peer reviewed publications based on the research supported by the RI that are core contribution to scientific fields** |

* Development of a socio-economic impact model is the objective of the RI-PATHS project [10]. Impact assessment is often based on evaluations, where impact indicators are complemented by narrative evidence.

** Adapted from the proposed long-term scientific pathway indicator of Horizon Europe [11].
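As a rough illustration of how an RI might check a candidate KPI set against the results chain, the following minimal sketch tags each indicator with one chain element and lists the elements that no indicator addresses. The names `ChainElement`, `Indicator` and `uncovered_elements` are hypothetical and chosen only for this example; the indicators are the examples from the table, with the impact indicator deliberately left out.

```python
from dataclasses import dataclass
from enum import Enum


class ChainElement(Enum):
    """The five elements of the results chain, in causal order."""
    INPUTS = 1
    ACTIVITIES = 2
    OUTPUTS = 3
    OUTCOMES = 4
    IMPACT = 5


@dataclass(frozen=True)
class Indicator:
    """A KPI tagged with the chain element it measures (hypothetical model)."""
    name: str
    element: ChainElement


def uncovered_elements(indicators):
    """Return the chain elements that no indicator addresses, in chain order."""
    covered = {ind.element for ind in indicators}
    return [el for el in ChainElement if el not in covered]


# Example indicators from the table; the impact indicator is omitted on purpose.
example_kpis = [
    Indicator("Financial resources per year", ChainElement.INPUTS),
    Indicator("Number of open calls per year", ChainElement.ACTIVITIES),
    Indicator(
        "Number of researchers who have access to research infrastructures",
        ChainElement.OUTPUTS,
    ),
    Indicator("Average impact factor of publications", ChainElement.OUTCOMES),
]

missing = uncovered_elements(example_kpis)
print([el.name for el in missing])  # prints ['IMPACT']
```

A check of this kind only shows whether each chain element has at least one indicator; it says nothing about whether the indicators themselves are well chosen.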

To ensure effectiveness and implementation, the proposed KPIs should be:

- Relevant – i.e. closely linked to the objectives to be achieved

- Accepted – e.g. by staff and stakeholders

- Credible for non-experts, unambiguous and easy to interpret

- Easy to monitor – e.g. data collection should be possible at low cost

- Robust – e.g. against manipulation

While it is highly recommended that the proposed indicators comply with these requirements, it is also essential that the scale of the monitoring system remains reasonable and compatible with the administrative capacity of each organisation. A limited number of indicators, which allow for monitoring of progress against a variety of objectives, therefore seems advisable.

To conclude, while the recent Council Conclusion underlined the importance of performance management, care needs to be taken to design and implement it correctly. One should take into account the principles behind performance management and respect the balance between its objectives and the administrative burden it can impose on staff. Implemented in such a way, it can significantly contribute to the long-term sustainability of a research infrastructure, as funders also base their investment decisions on the results and impacts of the initiatives they fund. Providing quality data on the performance of an institution not only supports the view that it has credible, output-oriented management in place, but also provides assurance that its various objectives are indeed being addressed.

02.04.2026

CERIC Newsletter | April 2026